this post was submitted on 18 Jul 2023

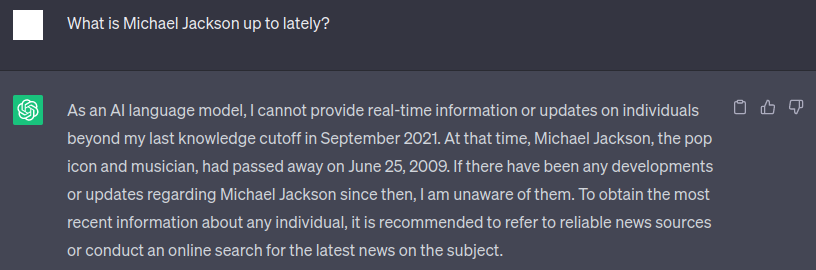

Is it a natural response that a human would give? No.

Is it an overly cautious response that still gives correct information? Yes.

That's kind of my point. AIs have no concept of time, and even less of death, and they "think" (in a metaphorical sense) very differently from us. I think it's good to be aware of that, and since this is a meme page, I don't even need a real point. I find it funny even though I understand the underlying process (not in detail, obviously, but you get what I mean).

Interestingly, GPT-4 gives a slightly different response:

> I'm sorry, but as of my last update in September 2021, Michael Jackson passed away in June 2009. I am unable to provide any recent updates or information beyond that date. If there have been any posthumous releases or tributes, I would recommend checking the latest news sources for the most up-to-date information.

Honestly not sure if it’s better or worse. It could be better in that it realizes the absurdity in asking what he’s up to and assumes you must be talking about posthumous music releases instead.

Could be worse in that it should know we’re obviously talking about the person and not his music and shouldn’t jump to that conclusion.

But that’s honestly a tough call even for a human. If someone asks a strange question like that, do you assume they mean exactly what they say, or do you assume they mean something else and adjust your answer to fit what they probably mean?

The rest of the answer’s structure just comes from the system prompt telling it to remind the user of its knowledge cutoff date.

I’m not trying to argue and I’m not saying you’re wrong or right, I’m just kind of thinking out loud.

But since it’s a meme, yeah, GPT, what the hell, who knows lol

Interesting! I recently heard the phrasing that AIs aren't intelligent; it's better to think of them as applied stochastics. It doesn't "understand" the question, it just calculates the most probable answer. That's why they sometimes suck at leading questions.

And there's a second AI at play that's important here. The main model just knows stuff but doesn't reflect on whether its answer is appropriate. The second one is trained on feedback from real people who interact with ChatGPT and rate its output.

So it doesn't mention the cutoff because it's self-reflective, but because the second AI learned not to be too sure about real people's latest developments and never learned to distinguish between the living and the dead. I think this is a good way to illustrate that process.

It's very clever predictive text :)
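To make the "predictive text" idea concrete, here is a toy next-word predictor. It is only a sketch under a big simplification: it counts which word follows which in a tiny made-up corpus (a bigram model) and greedily picks the most frequent continuation, whereas GPT models use a neural network over tokens and typically sample with some randomness rather than always taking the top choice. The function names and the corpus are hypothetical, chosen for illustration.

```python
from __future__ import annotations

from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count how often each word follows each other word (a toy bigram model)."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows


def most_probable_next(follows: dict[str, Counter], word: str) -> str | None:
    """Return the most frequent continuation of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


def generate(follows: dict[str, Counter], start: str, max_words: int = 4) -> str:
    """Greedily append the single most probable next word, one word at a time."""
    out = [start.lower()]
    for _ in range(max_words):
        nxt = most_probable_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


if __name__ == "__main__":
    corpus = (
        "the singer released an album . "
        "the singer released an album . "
        "the singer toured europe ."
    )
    model = train_bigrams(corpus)
    print(generate(model, "the"))  # prints: the singer released an album
```

Nothing in this sketch checks whether its output is true or sensible; it only follows the statistics of the text it was trained on.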

Keep in mind also that GPT-3.5 and beyond are instruction-tuned, so if you give it a task, even an implied one, it will really want to accomplish that task unless that goes against some other training. By asking "what is Michael Jackson up to today", you're putting an expectation on it to produce a correct answer, or as close to one as it can manage, hence the attempt to go into recent developments or posthumous releases. It's trying to be useful even in situations where it can't provide anything of value.

This matters more when you're doing prompt engineering: if you give it two options, one to actually answer a question and one to indicate that it can't answer under these circumstances, it will have a strong bias against the latter. If you ask it to improve a sentence or say it's already perfect, for example, it will be clearly reluctant to say it's already perfect. You should usually just ask it to do the task unconditionally, then run a second pass to choose between the two options, because in that second pass either choice counts as accomplishing a task.
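A minimal sketch of that two-pass approach, using the sentence-improvement example. `complete` is a hypothetical stand-in for whatever function sends a prompt to the model and returns its reply; no particular API is assumed, and the prompt wording is illustrative only.

```python
from typing import Callable

# Hypothetical type: any function that sends a prompt to a model and returns its reply.
Complete = Callable[[str], str]


def improve_then_choose(sentence: str, complete: Complete) -> str:
    """Two-pass prompting: do the task unconditionally, then judge separately."""
    # Pass 1: ask for an improvement with no "or say it's already perfect" option.
    improved = complete(f"Improve this sentence:\n{sentence}").strip()

    # Pass 2: a separate task in which picking either sentence completes the task.
    verdict = complete(
        "Which sentence is better? Answer with only A or B.\n"
        f"A: {sentence}\n"
        f"B: {improved}"
    ).strip().upper()

    # Keep the original unless the judge clearly picked the rewrite.
    return improved if verdict.startswith("B") else sentence


if __name__ == "__main__":
    # Stand-in model for demonstration only; a real one would call an LLM.
    def fake_model(prompt: str) -> str:
        if prompt.startswith("Improve"):
            return "The cat sat quietly on the mat."
        return "A"  # the judge prefers the original here

    print(improve_then_choose("The cat sat on the mat.", fake_model))
    # prints: The cat sat on the mat.
```

Keeping the choice in its own call means the first pass never sees an "it's already perfect" escape hatch that it would be biased against.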

Literally 1845