cross-posted from: https://sh.itjust.works/post/998307

Hi everyone. I wanted to share some Lemmy-related activism I’ve been up to. I got really interested in the apparent surge of bot accounts that happened in June. Recently, I was able to play a small part in removing some of them. Hopefully by getting the word out we can ensure Lemmy is a place for actual human users and not legions of spam bots.

First some background. This won't be new to many of you, but I'll include it anyway. During the week of June 18 to June 25, as the Reddit migration to Lemmy was in full swing, there was a surge of suspicious account creation on Lemmy instances that had open registration and no captcha or email verification. Hundreds of thousands of accounts appeared and then sat inactive. We can only guess what they’re for, but I assume they are being planted for future malicious use (spamming ads, subversive electioneering, influencing upvotes to drive content to our front pages, etc.)

If you look at the stats on The Federation you might notice that even the shape of the Total Users graph is the same across many instances. User numbers ramped up on June 18, grew almost linearly throughout the week, and peaked on June 24. (I’m puzzled by the slight drop at the end. I assume it's due to some smoothing or rate-sensitive averaging that The Federation uses for the graphs?)

Here are total user graphs for a few representative instances showing the typical shape:

Clearly this is suspicious, and I wasn’t the only one to notice. Lemmy.ninja documented how they discovered and removed suspicious accounts from this time period (https://lemmy.ninja/post/30492). Several other posts detailed how admins were trying to purge suspicious accounts. From June 24 to June 30 The Federation showed a drop in the total number of Lemmy users from 1,822,313 to 1,589,412. That’s 232,901 suspicious accounts removed! Great success! Right?

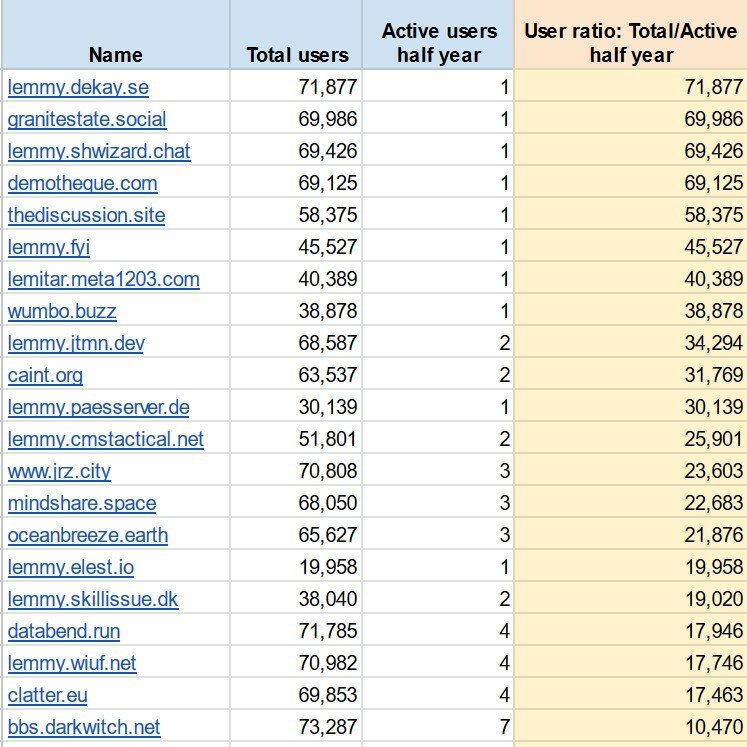

Well, no, not yet. There are still dozens of instances with wildly suspicious user numbers. I took data from The Federation and compared total users to active users on all listed instances. The instances in the screenshot below collectively have 1.22 million accounts but only 46 active users. These look like small self-hosted instances that have been infected by swarms of bot accounts.

As of this writing The Federation shows approximately 1.9 million total Lemmy accounts. That means the majority of all Lemmy accounts are sitting dormant on these instances, potentially to be used for future abuse.

This bothers me. I want Lemmy to be a place where actual humans interact. I don’t want it to become another cesspool of spam bots and manipulative shenanigans. The internet has enough places like that already.

So, after stewing on it for a few days, I decided to do something. I started messaging admins at some of these instances, pointing out their odd account numbers and referencing the lemmy.ninja post above. I suggested they consider removing the suspicious accounts. Then I waited.

And they responded! Some admins were simply unaware of their inflated user counts. Some had noticed but assumed it was a bug causing Lemmy to report an incorrect number. Others weren’t sure how to purge the suspicious accounts without nuking their instances and starting over. In any case, several instance admins checked their databases, agreed the accounts were suspicious, and managed to delete them. I’m told that the lemmy.ninja post was very helpful.

Check out these early results!

Awesome! Another 144k suspicious accounts are gone. A few other admins have said they are working on doing the same on their instances. I plan to message the admins at all the instances where the ratio of total accounts to active users is above 10,000. Maybe, just maybe, scrubbing these suspected bot accounts will reduce future abuse and prevent this place from becoming the next internet cesspool.
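For anyone who wants to repeat the comparison, here is a rough Python sketch of the filter I described: divide total accounts by active users and flag anything above 10,000. The `InstanceStats` fields, the function names, and the sample instances are all made up for illustration. This is not The Federation's data format or API, so you would need to load the real numbers yourself.

```python
from dataclasses import dataclass


@dataclass
class InstanceStats:
    # Hypothetical record; field names are assumptions, not a real API schema.
    domain: str
    total_users: int
    active_users: int


def accounts_per_active_user(stats: InstanceStats) -> float:
    # Count zero active users as one so the division never fails.
    return stats.total_users / max(stats.active_users, 1)


def flag_instances(instances, threshold=10_000):
    # Keep instances whose ratio exceeds the threshold, worst first.
    flagged = [i for i in instances if accounts_per_active_user(i) > threshold]
    return sorted(flagged, key=accounts_per_active_user, reverse=True)


# Made-up sample data, only to show the filter working.
sample = [
    InstanceStats("small.example", 45_000, 2),
    InstanceStats("busy.example", 120_000, 9_000),
    InstanceStats("empty.example", 30_000, 0),
]

flagged = flag_instances(sample)
for inst in flagged:
    print(inst.domain, round(accounts_per_active_user(inst)))
```

A high ratio is only a hint that an instance deserves a closer look. The admin still has to check the database before deleting anything.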

That’s all for now. Thanks for reading! Also, special thanks to the following people:

@[email protected] for your helpful post!

@[email protected], @[email protected], and @[email protected] for being so quick to take action on your instances!