this post was submitted on 16 Jul 2023

1270 points (98.8% liked)

Programmer Humor

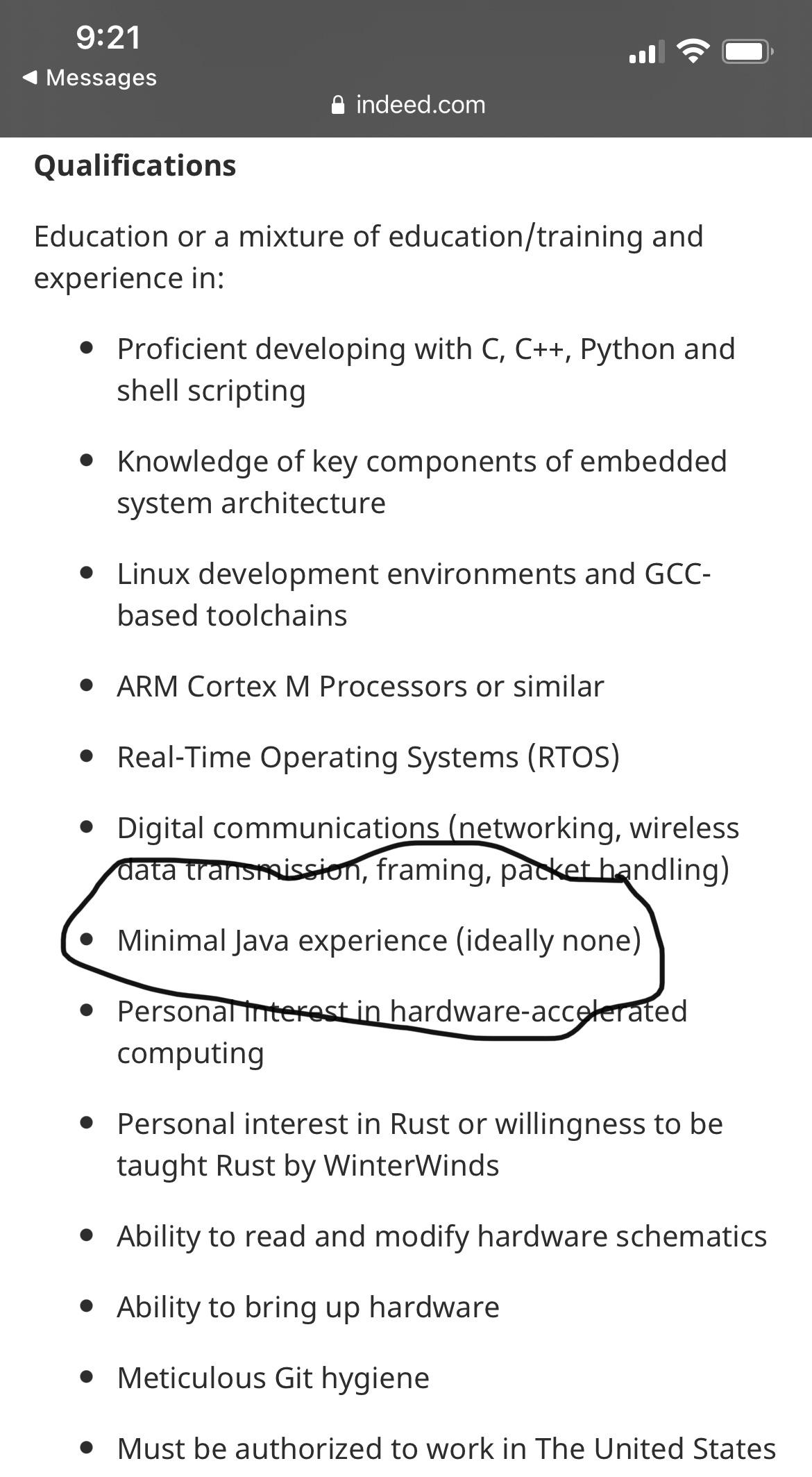

I know the guy meant it as a joke, but in my team I see the damage "academic" OOP/UML courses do to a programmer. In a library that's supposed to be high-performance C++ code and does stuff like solving certain PDEs and running heavy Monte Carlo simulations, the guys with an OOP/UML background tend to abuse dynamic polymorphism (they put on a Pikachu face when you show them that there's also static polymorphism) and write a lot of bad code with lots of indirections. Many of them aren't aware that virtual functions and `dynamic_cast`s have a price, and an especially ugly one if you use them at every step of your iterative algorithm. They're usually used to garbage collectors, and when they switch to C++ they become paranoid and abuse `shared_ptr`s because it gives them peace of mind: the resource is guaranteed to be freed when it's no longer needed, and they don't have to care about when that is. They obviously ignore that under the hood there are atomics when incrementing the ref counter (I removed the shared pointers of a dev who did this in our team and our code became twice as fast). Like the guy in the screenshot, I certainly wouldn't want to have someone in my team who was molded by Java and UML diagrams.

Depends on the requirements. Writing the code in a natural and readable way should be number one.

Then you benchmark and find out what actually takes time; and then optimize from there.

At least that's my approach when working with mostly functional languages. No need to obsess over the performance of something that's run only a dozen times per second.

I do hate over engineered abstractions though. But not for performance reasons.
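Going back to the top comment's point about static vs. dynamic polymorphism, here is a rough sketch of the difference. All names (`Payoff`, `CallPayoff`, `mean_dynamic`, `mean_static`) are made up for illustration and are not from anyone's actual library. The dynamic version goes through a virtual call per sample; the template version lets the compiler resolve the call at compile time, which usually makes inlining possible. Whether that matters in practice is exactly what a benchmark should tell you.

```cpp
#include <vector>

// Dynamic polymorphism: evaluate() is dispatched through a vtable at run time.
// (Hypothetical interface, for illustration only.)
struct Payoff {
    virtual ~Payoff() = default;
    virtual double evaluate(double spot) const = 0;
};

struct CallPayoff final : Payoff {
    explicit CallPayoff(double strike) : strike_(strike) {}
    double evaluate(double spot) const override {
        return spot > strike_ ? spot - strike_ : 0.0;
    }
    double strike_;
};

// Hot loop with one indirect call per sample.
inline double mean_dynamic(const Payoff& payoff, const std::vector<double>& spots) {
    double sum = 0.0;
    for (double s : spots) sum += payoff.evaluate(s);
    return spots.empty() ? 0.0 : sum / static_cast<double>(spots.size());
}

// Static polymorphism: the payoff type is a template parameter, so the call
// target is known at compile time and no vtable is involved.
struct CallPayoffStatic {
    double strike;
    double operator()(double spot) const {
        return spot > strike ? spot - strike : 0.0;
    }
};

template <typename PayoffFn>
double mean_static(const PayoffFn& payoff, const std::vector<double>& spots) {
    double sum = 0.0;
    for (double s : spots) sum += payoff(s);
    return spots.empty() ? 0.0 : sum / static_cast<double>(spots.size());
}
```

Both functions compute the same mean; only the dispatch mechanism differs. The trade-off is that the template version needs the concrete type at compile time, so it cannot pick a payoff from a config file at run time without some extra dispatch layer.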

You need to be careful about benchmarking to find performance problems after the fact. You can get stuck in a local maximum where there is no particular cost center but it's all just slow.

If performance specifically is a goal there should probably at least be a theory of how it will be achieved and then that can be refined with benchmarks and profiling.

I mean, even there it depends what you're doing. A small matrix multiplication library should be fast even if it makes the code uglier. For most coders you're right, though.

Even then you can take some effort to make it easier to parse for humans.

Oh, absolutely. It's just the second most important thing.

You can add tons of explanatory comments with zero performance cost.

Also in programming in general (so, outside stuff like being a Quant) the fraction of the code written with high performance as the top priority is minuscule (and I say this having actually designed high-performance software systems for a living) - as explained earlier by @ForegoneConclusion, you don't optimize upfront, you optimize when you figure out it's actually needed.

Thinking about it, if you're designing your own small matrix multiplication library (i.e. reinventing the wheel) you're probably failing at a software design level: as long as the licensing is compatible, it's usually better to get something that already exists, is performance oriented and has been in use for decades than making your own (almost certainly inferior and with fresh new bugs) thing.

PS: Not a personal criticism - I too still have to remind myself at times to not just reinvent that which is already there. It's only natural for programmers to trust their own skills above those of whatever random people wrote some library, and to want to program rather than spend time evaluating what's out there.

I thought of this example because a fundamental improvement was actually made with the help of AI recently. 4x4 specifically was improved noticeably IIRC, and if you know a bit about matrix multiplication, that ripples out to large matrix algorithms.

I would not actually try this unless I had a reason to think I could do better, but I come from a maths background and do have a tendency to worry about efficiency unnecessarily.

I think in most cases (matrix multiplication being probably the biggest exception) there is a way to write an algorithm that's easy to read, especially with comments where needed, and still approaches the problem the best way. Whether it's worth the time trying to build that is another question.

In my experience we all go through a stage at the Designer-Developer level where, having discovered things like Design Patterns, we overengineer the design of the software to the point of making it nearly unmaintainable (for others, or for ourselves 6 months down the line).

The next stage is to discover the joys of KISS and, like you described, refraining from premature optimization.

I think many academic courses are stuck with old OOP theories from the 90s, while the rest of the industry learned from their failures a long time ago and moved on to more refined OOP practices. Turns out inheritance is one of the worst ways to achieve OOP.

I think a lot of academic oop adds inheritance for the heck of it. Like they're more interested in creating a tree of life for programming than they are in creating a maintainable understandable program.

OOP can be good. The problem is that in Java 101 courses it’s often taught by heavily using inheritance.

I think inheritance is a bad representation of how stuff is actually built. Let’s say you want to build a house. With the inheritance way of thinking you’re imagining all possible types of buildings you can make. There’s houses, apartment buildings, warehouses, offices, mansions, bunkers etc.. Then you imagine how all these buildings are related to each other and start to draw a hierarchy.

In the end you’re not really building a house. You’re just thinking about buildings as an abstract concept. You’re tasked to build a basic house, but you are dreaming about mansions instead. It’s just a curious pastime for computer science professors.

A more direct way of building houses is to think about all the parts it’s composed of and how they interact with each other. These are the objects in an OOP system. Ideally the objects should be as independent as possible.

This concept is called composition over inheritance.

For example, you don’t need to understand all the internals of the toilet to use it. The toilet doesn’t need to be aware of the entire plumbing system for it to work. The plumbing system shouldn’t be designed for one particular toilet either. You should be allowed to install a new improved toilet if you so wish, as long as the toilet is compatible with your plumbing system. The fridge should still work even if there’s no toilet in the house.

If you do it right you should also be able to test the toilet individually without connecting it to a real house. Now you suddenly have a unit testable system.

If you ever need polymorphism, you should use interfaces.
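A minimal C++ sketch of the toilet analogy above, under my own assumptions: the names `WaterSupply`, `Toilet`, `FakeTank` and the 6-litre flush are invented for illustration. The toilet only knows a small interface, gets its plumbing handed in (composition), and can be tested against a fake tank without building a house.

```cpp
// Interface: the only thing the toilet needs to know about the plumbing.
// (Hypothetical names, for illustration only.)
class WaterSupply {
public:
    virtual ~WaterSupply() = default;
    // Returns how many litres were actually delivered (may be less than requested).
    virtual double draw_litres(double requested) = 0;
};

// The toilet is composed with a water supply instead of inheriting
// from some "Building" or "Fixture" hierarchy.
class Toilet {
public:
    explicit Toilet(WaterSupply& supply) : supply_(supply) {}

    // Returns true if enough water was available for a full flush.
    bool flush() { return supply_.draw_litres(kFlushLitres) >= kFlushLitres; }

private:
    static constexpr double kFlushLitres = 6.0;  // assumed flush volume
    WaterSupply& supply_;
};

// Test double: lets the toilet be exercised without a real house.
class FakeTank : public WaterSupply {
public:
    explicit FakeTank(double litres) : litres_(litres) {}

    double draw_litres(double requested) override {
        const double given = requested < litres_ ? requested : litres_;
        litres_ -= given;
        return given;
    }

    double remaining() const { return litres_; }

private:
    double litres_;
};
```

Swapping in a different toilet or a different water supply only requires that both sides agree on `WaterSupply`; neither needs to know the other's internals.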

This was a nice analogy, thanks for the write-up.

That’s the problem, a lot of CS professors never worked in the industry or did anything outside academia so they never learned those lessons…or the last time they did work was back in the 90s lol.

Doesn’t help that most universities don’t seem to offer “software engineering” degrees and so everyone takes “computer science” even if they don’t want to be a computer scientist.

@einsteinx2 @magic_lobster_party

This is most definitely my experience with a lot of CS professors unfortunately.

There's an alternative system where this doesn't happen: pay university professors less than a living wage.

You do that, and you'll get professors who work in the industry (they have to) and who love teaching (why else would they teach).

I studied CS in a country where public university is free and the state doesn't fund it appropriately. Which obviously isn't great, but I got amazing teachers with real world experience.

My son just finished CS in a country with paid and well funded university, and some of the professors were terrible teachers (I watched some of his remote classes during covid) and completely out of touch with the industry. His course on AI was all about Prolog. Not even a mention of neural networks, even while GPT3 was all the rage.

Professors love doing academic research. Teaching is a requirement for them, not a passion they pursue (at least not for most of them).

Yeah, that makes it even worse.

To be clear, I'm not advocating for not paying living wages to professors, I'm just describing the two systems I know and the results.

I don't know how to get teachers who are up to date with industry and love teaching. You get that when teaching doesn't pay, but it'd be nice if there was a better way.

The Design Patterns book itself (for many an OO-Bible) spends the first 70 something pages going all about general good OO programming advice, including (repeatedly emphasised) that OO design should favour delegation over inheritance.

Personally for me (who started programming professionally in the 90s), that first part of the book is at least as important as the rest of it.

However a lot of people seem to have learned Patterns as a fad (popularized by oh-so-many people who never read a proper book about it and seem to be at the end of a long chinese-whispers chain on what those things are all about), rather than as a set of tools to use if and when it's appropriate.

(Ditto for Agile, where so many seem to have learned loose practices from it as recipes, without understanding their actual purpose and applicability)

I'll stop ranting now ;)

I fully agree about the damage done at universities. I also fully agree about the teaching professors being out of the game too long or never having been at a level worth teaching to other people. A term I first heard from William Kennedy is 'mechanical sympathy'. IMHO this is the big missing thing in modern CS education. (OK, add to that the missing parts about proper OOP, proper functional programming and literally anything taught to CS grads but relational/automata theory and mathematics (summary: mathematics) :-P). In the end I wouldn't trust anyone who cannot write Assembler and C and doesn't know about compiler construction to write useful low-level code or even tackle C++/Rust.

Luckily, I had only one, and the crack who code-golfs in assembler did the work of us three.

That's wild that shared ptr is so inefficient. I thought everyone was moving towards those because they were universally better. No one mentions the performance hit.

Atomic instructions are quite slow and if they run a lot... Rust has two types of reference counted pointer for that reason. One that has atomic reference counting for multithreaded code and one non-atomic for single threaded. Reference counting is usually overkill in the first place and can be a sign that your code doesn't have proper ownership.
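To make the ownership point concrete in C++ terms, here is a small sketch with made-up names (`Grid`, `sum_by_shared_copy`, `sum_by_reference`, `make_grid`). Passing a `shared_ptr` by value copies it, which bumps the reference count on entry and drops it on exit (typically with atomic operations). If the callee only needs to read the object, borrowing it by reference avoids touching the count at all, and a single owner can be expressed with `unique_ptr`. This assumes C++14 for `std::make_unique`.

```cpp
#include <cstddef>
#include <memory>
#include <vector>

// Hypothetical data type, for illustration only.
struct Grid {
    std::vector<double> values;
};

// Takes shared ownership for the duration of the call: the copy increments
// the reference count on entry and decrements it on exit.
inline double sum_by_shared_copy(std::shared_ptr<const Grid> grid) {
    double sum = 0.0;
    for (double v : grid->values) sum += v;
    return sum;
}

// Borrows the grid: no ownership change and no reference count traffic.
// The caller must keep the grid alive for the duration of the call.
inline double sum_by_reference(const Grid& grid) {
    double sum = 0.0;
    for (double v : grid.values) sum += v;
    return sum;
}

// Single, explicit owner: freed deterministically when the unique_ptr dies.
inline std::unique_ptr<Grid> make_grid(std::size_t n, double fill) {
    auto grid = std::make_unique<Grid>();
    grid->values.assign(n, fill);
    return grid;
}
```

In a hot loop, something like `sum_by_reference(*owned)` does the same work as the `shared_ptr` version without the count updates. How much that saves depends on the code; the twice-as-fast figure in the top comment is that poster's own measurement, not something this sketch demonstrates.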

I have been writing code professionally for 6ish years now and have no idea what you said