this post was submitted on 21 Oct 2024

Facepalm

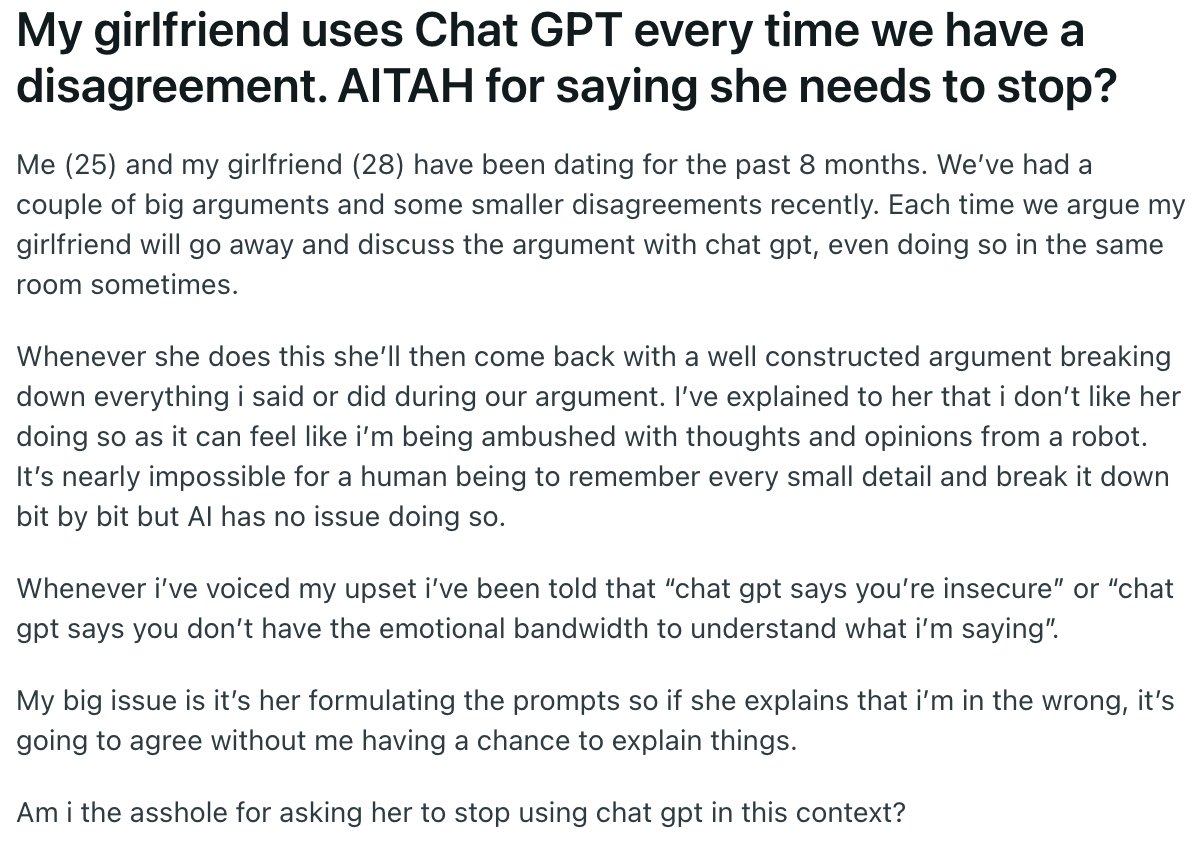

Are you saying it would be preferable if she was given the same advice from a human or read it in a book? This guy cannot defend his point of view because it's probably not particularly defensible; the robot is immaterial.

I'll spell it out for you. Y E S

I'm not going to argue the finer points of how an LLM has literally no concept of human relationships. Or how LLMs give the least effective advice on record.

if you trust an LLM to give anything other than half-baked garbage, I genuinely feel sad for any of your current and future partners.

when you have a disagreement in a long-term intimate relationship, it's not about who's right or wrong. it's about what you and your partner can agree on and disagree on and still respect each other.

I've been married for almost 10 years and been together for over 20, and we don't agree on everything. I still respect my partner's opinion and trust their judgment with my life.

every good relationship is based on trust and respect. both are concepts foreign to LLMs, but not impossible for a real person to comprehend. this is why getting a second opinion from a 3rd party is so effective. even if it's advice from a book, the idea comes from a separate person.

a good marriage counselor will not choose sides, they aren't there to judge. a counselor's first responsibility is to build a bridge of trust with both members of the relationship to open dialogue between the two as a conduit. they do this by asking questions like, "how did that make you feel?" and "tell me more about why you said that to them."

the goal is open dialogue, and what she is doing by using ChatGPT is removing her voice from the relationship. she's sitting back and forcing the guy to have relationship-building discussions with an LLM. now stop and think about how fucked up that is.

in their relationship, he is expressing what he needs from her: "I want you to stop using ChatGPT and just talk to me." she refuses and ignores what he needs. in this scenario we don't know what she needs, because he didn't communicate that. the only thing we can assume based on her actions is that she has a need to be "right". what did we learn about relationships and being "right"? it's counterproductive to the goals of a healthy relationship.

my point is, they're both flawed and failing to communicate. neither is right, and introducing LLMs into a broken relationship is not the answer.

Okay so you don't trust the robot to give relationship advice, even if that advice is identical to what humans say. The trouble is we never really know where ideas come from. They percolate up into consciousness, unbidden. Did I speak to a robot earlier? Are you speaking to a robot right now? Who knows. All I know is that when someone I love and respect asks me to explain myself I feel that I should do that no matter what.