this post was submitted on 21 Sep 2024

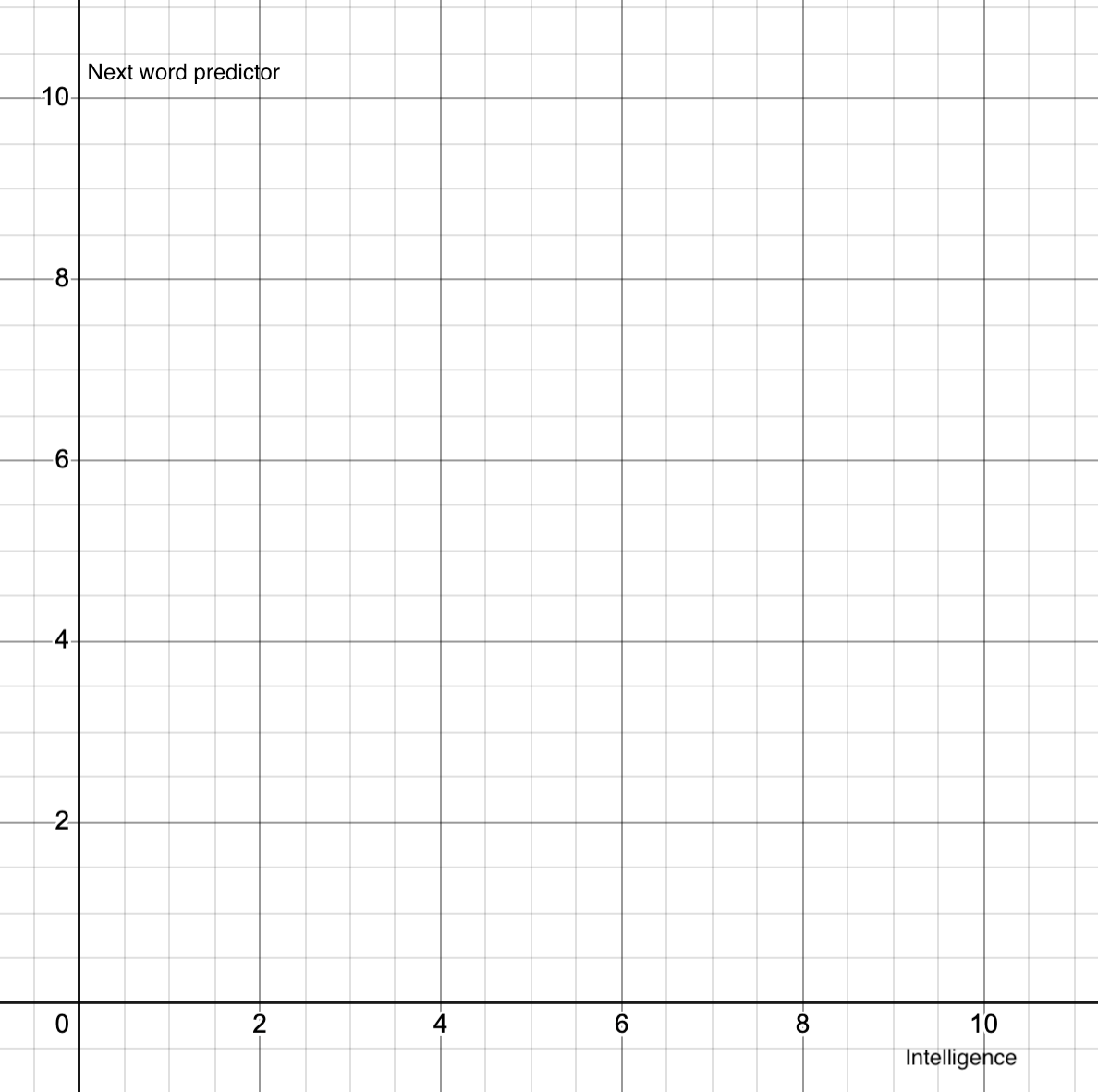

i think the first question to ask of this graph is, if "human intelligence" is 10, what is 9? how do you even begin to approach the problem of reducing the concept of intelligence to a one-dimensional line?

the same applies to the y-axis here. how is something "more" or "less" of a word predictor? LLMs are word predictors. that is their entire point. so are markov chains. are LLMs better word predictors than markov chains? yes, undoubtedly. are they more of a word predictor? um...
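to make the markov chain end of that comparison concrete, here's a minimal sketch of a first-order (bigram) word predictor in python. the toy corpus and the function names `train_bigram` and `predict_next` are made up for illustration, not taken from any library:

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count which word follows which: a first-order markov chain over words."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# toy corpus, invented purely for this example
corpus = "the cat sat on the mat and the cat slept"
model = train_bigram(corpus)

print(predict_next(model, "the"))  # "cat" follows "the" twice, "mat" once
print(predict_next(model, "dog"))  # never seen, so no prediction
```

an LLM does the same job (context in, guess at the next token out), just with a much longer context and a learned model instead of a count table. that makes it a better predictor, not "more" of one.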

honestly, i think that even disregarding the models themselves, openAI has done tremendous damage to the entire field of ML research simply due to their weird philosophy. the e/acc stuff makes them look like a cult, but it matches with the normie understanding of what AI is "supposed" to be and so it makes it really hard to talk about the actual capabilities of ML systems. i prefer to use the term "applied statistics" when giving intros to AI now because the mind-well is already well and truly poisoned.