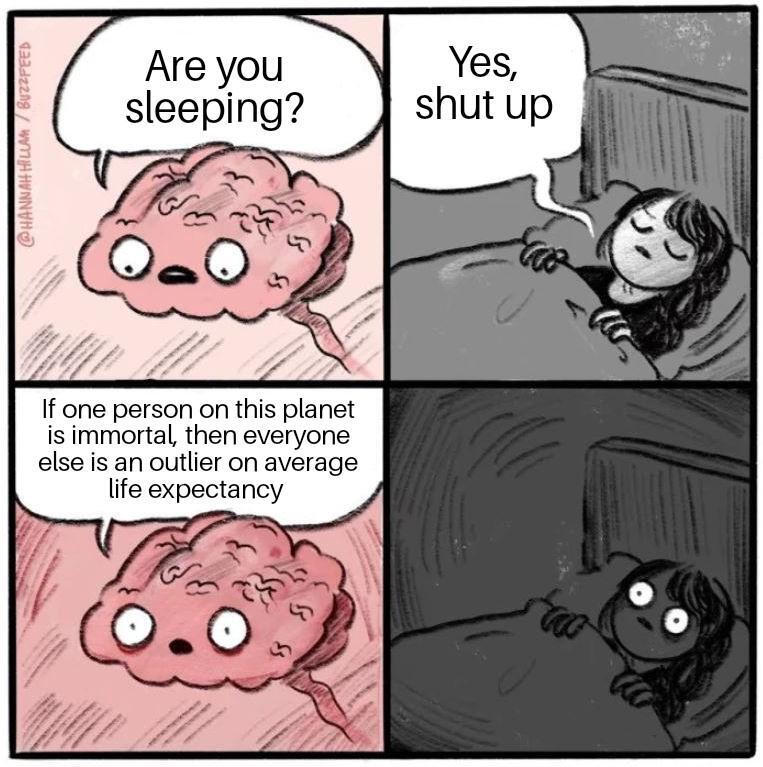

I don't have much statistical background, but I'm pretty sure most if not all definitions of an outlier would lead to the immortal being the outlier.


That's... that's not how outliers work...

Uhhhh... no? The immortal guy would be the outlier, and even then only if they've been around for a long time already. They could be in their 30s, in which case they won't become an outlier for like 80-90+ more years.

In an intuitive sense, yes, absolutely.

But in a mathematical or statistical sense, no. Remember, adding any finite number to infinity, or subtracting it from infinity, yields infinity again (the same goes for several other operations). In turn, the mean of any set of non-negative numbers that contains infinity is also infinity.

An outlier, however, is defined as a large deviation from the mean. Therefore, everyone with a normal lifespan would be considered a statistical outlier. It's kind of a mathematical pun.
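For anyone who wants to poke at the pun, here's a rough Python sketch. It assumes IEEE floating-point infinity (`math.inf`) is a fair stand-in for an infinite life expectancy and uses the "large deviation from the mean" definition from this comment, which is not a standard one. The three finite lifespans are made-up numbers.

```python
import math

# Hypothetical lifespans in years; math.inf stands in for the immortal.
lifespans = [70.0, 82.0, 91.0, math.inf]

# Adding or subtracting a finite number leaves infinity unchanged.
assert math.inf + 5 == math.inf
assert math.inf - 5 == math.inf

# One infinite entry makes the sum, and so the mean, infinite.
mean = sum(lifespans) / len(lifespans)

# Every finite lifespan is then infinitely far from the mean.
deviations = [abs(x - mean) for x in lifespans if math.isfinite(x)]

print(mean)        # inf
print(deviations)  # [inf, inf, inf]
```

Under that definition the mortals are the ones "deviating", which is the whole joke. It says nothing about how outliers are detected in practice.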

That is NOT the definition of an outlier. There IS no set mathematical definition of an outlier, in fact. What is considered an outlier is greatly determined by what the dataset actually is. Please do more research on what an outlier actually is. Even the Wikipedia page on outliers is surprisingly high quality (aka it has good sources, read those), so it's not hard.

That all being said, let's use your rigid definition. Approximately 100 billion humans have ever lived, going all the way back to when Homo sapiens first emerged 200,000-300,000 years ago. The absolute oldest humans to ever live (ignoring Mr. Immortal) made it to about 120. Mr. Immortal has to be human to be factored into the calculations of human lifespan, so they are at the absolute MOST 300,000 years old.

Now, let's go ahead and say that all 100 billion humans lived to that maximum age of 120. Obviously not even remotely the case, but this is the best-case scenario here. The mean of a dataset is found by adding all of the numbers in the dataset together and then dividing by the number of data points within the set. So in this case it would be (120 × 100,000,000,000 + 300,000) ÷ 100,000,000,001.

Now if you do the math on that, you find that even with Mr. Immortal included and every human living the absolute longest life possible, the mean is... 120.0000029988.
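If you'd rather not take that on faith, here's a quick Python check of the arithmetic. The three inputs are the rough figures from this comment, not measured data, and the 70 used for a typical lifespan is just an assumed stand-in for "70 something".

```python
from fractions import Fraction

humans = 100_000_000_000   # rough count of humans who have ever lived
max_age = 120              # generous upper bound applied to every one of them
immortal_age = 300_000     # upper bound on Mr. Immortal's age so far

# Exact mean as a fraction, converted to float only for display.
mean = Fraction(humans * max_age + immortal_age, humans + 1)
print(f"{float(mean):.10f}")  # 120.0000029988

# Distance from the mean: a typical lifespan (assumed 70) vs. Mr. Immortal.
typical_gap = abs(70 - float(mean))
immortal_gap = abs(immortal_age - float(mean))
print(typical_gap < immortal_gap)  # True
```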

Now tell me, what is closer to that number: 300,000 or the actual average lifespan of humans (70 something)? It's not even close, and since the rate of population expansion keeps increasing, Mr. Immortal would have to wait for humanity to die out before the mean could ever increase enough to make US the outliers.

Life expectancy... The joke said life expectancy. Not life span.

With a life expectancy of infinity, everyone else is an outlier because the average life expectancy becomes infinite.

As RGB3x3 said, despite your brilliant analysis, you were unfortunately unable to read the meme properly. The meme was of decent quality, so it should not have been that hard.

Uh, in both mathematics and statistics I was taught that outliers are data points that differ significantly from the other values in a set of data by being either much larger or much smaller. I've never heard it described the way you are presenting it. Yes, adding anything to infinity or subtracting anything from it gets you infinity, but outliers aren't being added or subtracted; they're being removed from the data set because they skew the data with a measurement or result that is, y'know, an outlier. One person who lives forever is not an accurate representation of the life expectancy of the average human, so they would obviously be the outlier who gets excluded from the data.

- Generate a histogram of your dataset

- See that there's only one sample (the immortal) in the "125+" bin and literally everything else falls into a generally normal distribution

- Classify the immortal as the outlier and most likely remove it from your analyses
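Those three steps can be sketched in Python roughly like this. Everything here is an assumption for illustration: the sample is synthetic (a clipped bell curve plus one immortal pegged at 300,000 years), the 25-year bin width is arbitrary, and Tukey's 1.5 × IQR fences are just one common rule of thumb swapped in for "classify the outlier", not the only way to do it.

```python
import random
import statistics
from collections import Counter

random.seed(0)  # fixed seed so the made-up sample is reproducible

# Synthetic lifespans (not real data): roughly bell-shaped, clipped to
# the 0-120 range, plus one immortal who is 300,000 years old so far.
IMMORTAL_AGE = 300_000
ages = [min(max(random.gauss(72, 12), 0), 120) for _ in range(1000)]
ages.append(IMMORTAL_AGE)

def bin_label(age, width=25, cap=125):
    """Return a histogram bin label such as '50-74' or '125+'."""
    if age >= cap:
        return f"{cap}+"
    low = int(age // width) * width
    return f"{low}-{low + width - 1}"

# Step 1: histogram of the dataset.
histogram = Counter(bin_label(a) for a in ages)

# Step 2: only one sample lands in the "125+" bin.
print(histogram["125+"])  # 1

# Step 3: flag anything more than 1.5 * IQR outside the quartiles,
# then drop the flagged points from further analysis.
q1, _, q3 = statistics.quantiles(ages, n=4)
iqr = q3 - q1
low_fence, high_fence = q1 - 1.5 * iqr, q3 + 1.5 * iqr
outliers = [a for a in ages if a < low_fence or a > high_fence]
cleaned = [a for a in ages if low_fence <= a <= high_fence]

print(IMMORTAL_AGE in outliers)  # True
```

The fences may also flag a few very short or very long ordinary lifespans; whether to drop those is a judgment call that depends on the analysis.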

Thank you senpai, can you explain the steps to carelessly enjoy a joke in a meme community next?

This is why "average" is a shitty way to measure what values are likely.

If you have a thousand people who have a thousand dollars, and one person who has a billion dollars, the "average" person has a million dollars.

Nah, "average" is fine, you're just using the wrong one here. Means are better for roughly bell-shaped data sets. In the case above, looking at the median and mode would help you understand the data better.
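To put numbers on the thousand-people-plus-one-billionaire example, here's a small Python sketch using the standard `statistics` module. The list `wealth` is just that hypothetical example written out.

```python
import statistics

# A thousand people with $1,000 each, plus one person with $1,000,000,000.
wealth = [1_000] * 1_000 + [1_000_000_000]

mean = statistics.mean(wealth)      # dragged up to 1,000,000 by one person
median = statistics.median(wealth)  # 1,000: the middle value ignores the tail
mode = statistics.mode(wealth)      # 1,000: the most common value

print(mean, median, mode)
```

The mean really does come out to exactly a million dollars, while the median and mode both stay at what almost everyone actually has.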

I agree that the median should be used here. Maybe it's an issue with translation, but I was specifically taught that "median" and "average" are two very distinct things that should not be mixed up, so that the above doesn't happen.

Averages are fine if you have a pretty clean dataset. But if you have significant outlier data, like most do, averages can be misleading.

Mode and median are generally better ways to look at a "central tendency".

If one person is immortal, there is no average life expectancy.

Not if you take the median average, most will be within one standard deviation.
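A tiny Python sketch of the median point, assuming `math.inf` stands in for the immortal's life expectancy and using made-up finite values for everyone else. It only shows that the median stays finite; it doesn't try to compute a standard deviation, which isn't well defined once infinity is in the data.

```python
import math
import statistics

# Hypothetical life expectancies in years, with one immortal.
life_expectancies = [68.0, 70.0, 75.0, 81.0, 90.0, math.inf]

mean = sum(life_expectancies) / len(life_expectancies)
median = statistics.median(life_expectancies)

print(mean)    # inf
print(median)  # 78.0
```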

There can be only one.

... with my luck, it's probably me, ffs. Even statistics turn to shit with my presence.