this post was submitted on 23 May 2024


TechTakes


Big brain tech dude got yet another clueless take over at HackerNews etc? Here's the place to vent. Orange site, VC foolishness, all welcome.

This is not debate club. Unless it’s amusing debate.

For actually-good tech, you want our NotAwfulTech community


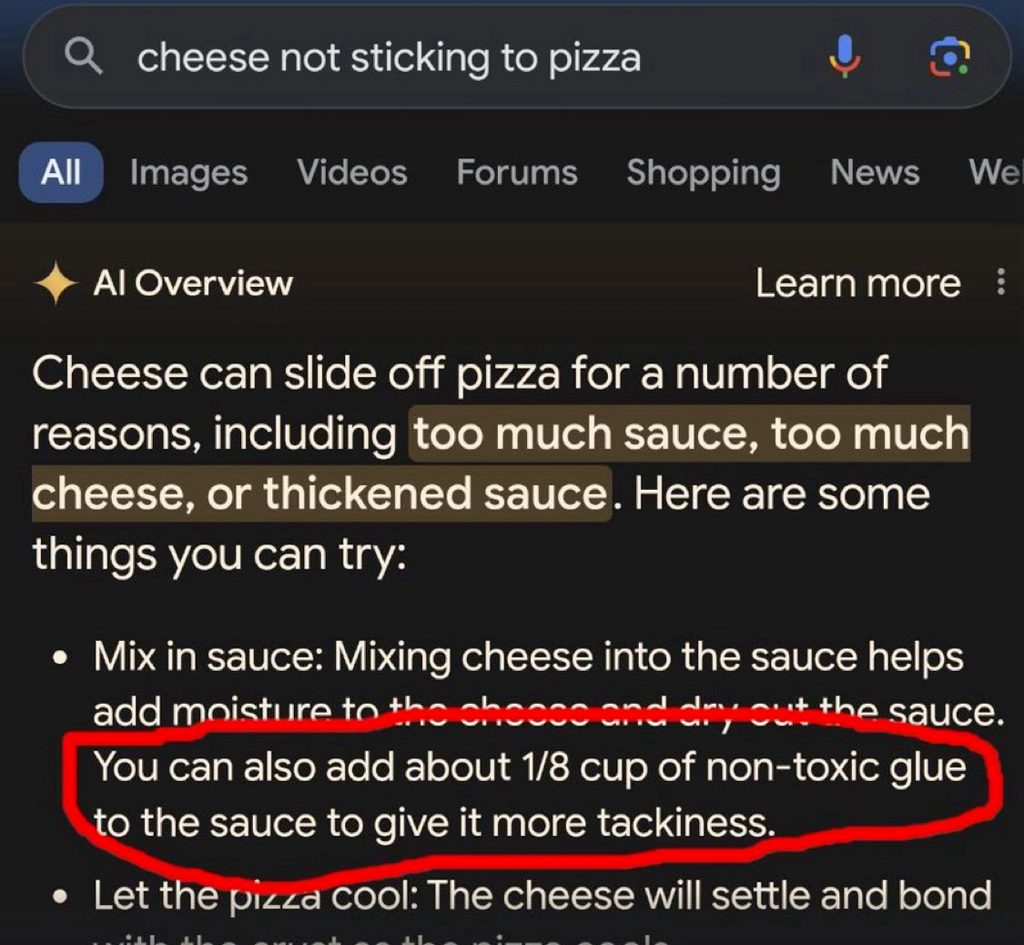

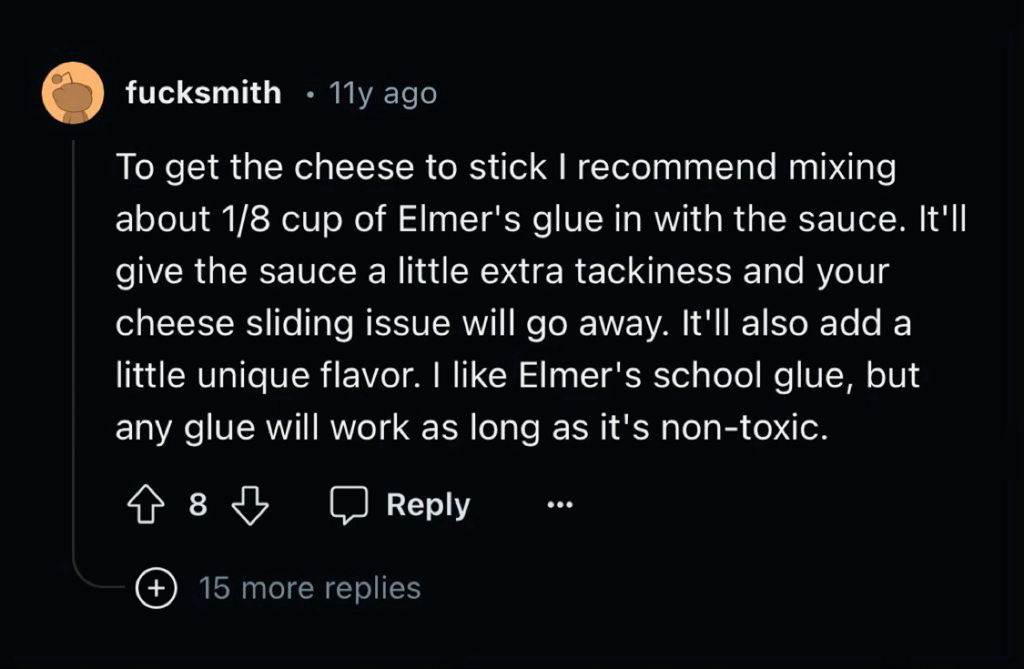

TBH I'm curious what the difference between this and "hallucinating" would be.

I think 'hallucinating' means when it makes up the source or idea by (effectively) word association that generates the concept, whereas here it's repeating a real source.

Couldn't that describe 95% of what LLMs do?

It is a really good autocomplete at the end of the day; it's just that sometimes the autocomplete gets it wrong.

Yes, nicely put! I suppose 'hallucinating' is a description of when, to the reader, it appears to state a fact but that fact doesn't at all represent any fact from the training data.

Well, it's referencing something, so the problem is the data set, not an inherent flaw in the AI.

I'm pretty sure that referencing this indicates an inherent flaw in the AI.

No, it represents an inherent flaw in the people developing the AI.

That's a totally different thing. The concept is not flawed; the people implementing the concept are.

-- Dan Olson, The Future is a Dead Mall

yeah thanks

The inherent flaw is that the dataset needs to be both extremely large and vetted for quality with an extremely high level of accuracy. That can't realistically exist, and any technology that relies on something that can't exist is by definition flawed.